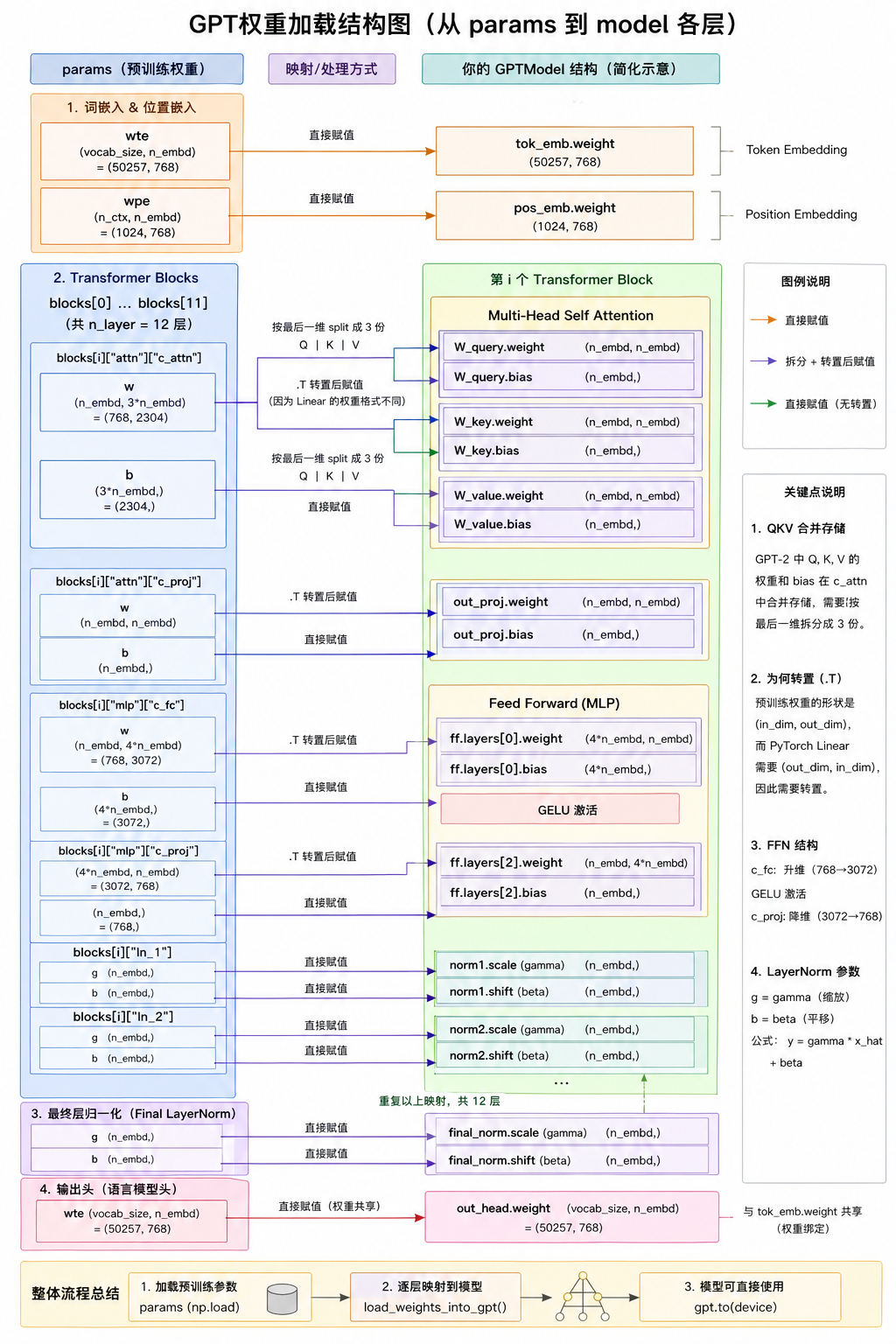

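| import torch

from gpt_download import download_and_load_gpt2

# Assumes GPTModel, GPT_CONFIG_124M, generate, text_to_token_ids,
# token_ids_to_text, tokenizer, and device are already defined.
settings, params = download_and_load_gpt2(model_size="124M", models_dir="gpt2")

# The weights are now loaded into Python; next, copy them into a model instance.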

model_configs = {
    "gpt2-small (124M)": {"emb_dim": 768, "n_layers": 12, "n_heads": 12},
    "gpt2-medium (355M)": {"emb_dim": 1024, "n_layers": 24, "n_heads": 16},
    "gpt2-large (774M)": {"emb_dim": 1280, "n_layers": 36, "n_heads": 20},
    "gpt2-xl (1558M)": {"emb_dim": 1600, "n_layers": 48, "n_heads": 25},
}

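# Start from the base config and override it with the chosen model's settings.
model_name = "gpt2-small (124M)"
NEW_CONFIG = GPT_CONFIG_124M.copy()
NEW_CONFIG.update(model_configs[model_name])
# The pretrained GPT-2 weights use a 1024-token context and bias terms in the QKV projections.
NEW_CONFIG.update({"context_length": 1024, "qkv_bias": True})

gpt = GPTModel(NEW_CONFIG)
gpt.eval()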

def assign(left, right):
    """Return `right` as a trainable parameter after checking it matches the shape of `left`."""
    if left.shape != right.shape:
        raise ValueError(f"Shape mismatch. Left: {left.shape}, Right: {right.shape}")
    return torch.nn.Parameter(torch.tensor(right))

import numpy as np

def load_weights_into_gpt(gpt, params):
    # Positional and token embeddings map over directly.
    gpt.pos_emb.weight = assign(gpt.pos_emb.weight, params['wpe'])
    gpt.tok_emb.weight = assign(gpt.tok_emb.weight, params['wte'])

    for b in range(len(params["blocks"])):
        # The checkpoint stores Q, K, and V as one fused matrix; split it into three.
        q_w, k_w, v_w = np.split(
            (params["blocks"][b]["attn"]["c_attn"])["w"], 3, axis=-1)
        gpt.trf_blocks[b].att.W_query.weight = assign(
            gpt.trf_blocks[b].att.W_query.weight, q_w.T)
        gpt.trf_blocks[b].att.W_key.weight = assign(
            gpt.trf_blocks[b].att.W_key.weight, k_w.T)
        gpt.trf_blocks[b].att.W_value.weight = assign(
            gpt.trf_blocks[b].att.W_value.weight, v_w.T)

        # The fused bias vector is split the same way.
        q_b, k_b, v_b = np.split(
            (params["blocks"][b]["attn"]["c_attn"])["b"], 3, axis=-1)
        gpt.trf_blocks[b].att.W_query.bias = assign(
            gpt.trf_blocks[b].att.W_query.bias, q_b)
        gpt.trf_blocks[b].att.W_key.bias = assign(
            gpt.trf_blocks[b].att.W_key.bias, k_b)
        gpt.trf_blocks[b].att.W_value.bias = assign(
            gpt.trf_blocks[b].att.W_value.bias, v_b)

        gpt.trf_blocks[b].att.out_proj.weight = assign(
            gpt.trf_blocks[b].att.out_proj.weight,
            params["blocks"][b]["attn"]["c_proj"]["w"].T)
        gpt.trf_blocks[b].att.out_proj.bias = assign(
            gpt.trf_blocks[b].att.out_proj.bias,
            params["blocks"][b]["attn"]["c_proj"]["b"])

        gpt.trf_blocks[b].ff.layers[0].weight = assign(
            gpt.trf_blocks[b].ff.layers[0].weight,
            params["blocks"][b]["mlp"]["c_fc"]["w"].T)
        gpt.trf_blocks[b].ff.layers[0].bias = assign(
            gpt.trf_blocks[b].ff.layers[0].bias,
            params["blocks"][b]["mlp"]["c_fc"]["b"])
        gpt.trf_blocks[b].ff.layers[2].weight = assign(
            gpt.trf_blocks[b].ff.layers[2].weight,
            params["blocks"][b]["mlp"]["c_proj"]["w"].T)
        gpt.trf_blocks[b].ff.layers[2].bias = assign(
            gpt.trf_blocks[b].ff.layers[2].bias,
            params["blocks"][b]["mlp"]["c_proj"]["b"])

        gpt.trf_blocks[b].norm1.scale = assign(
            gpt.trf_blocks[b].norm1.scale,
            params["blocks"][b]["ln_1"]["g"])
        gpt.trf_blocks[b].norm1.shift = assign(
            gpt.trf_blocks[b].norm1.shift,
            params["blocks"][b]["ln_1"]["b"])
        gpt.trf_blocks[b].norm2.scale = assign(
            gpt.trf_blocks[b].norm2.scale,
            params["blocks"][b]["ln_2"]["g"])
        gpt.trf_blocks[b].norm2.shift = assign(
            gpt.trf_blocks[b].norm2.shift,
            params["blocks"][b]["ln_2"]["b"])

    gpt.final_norm.scale = assign(gpt.final_norm.scale, params["g"])
    gpt.final_norm.shift = assign(gpt.final_norm.shift, params["b"])
    # The output head reuses the token-embedding matrix (weight tying).
    gpt.out_head.weight = assign(gpt.out_head.weight, params["wte"])

load_weights_into_gpt(gpt, params)
gpt.to(device)

torch.manual_seed(123)

token_ids = generate(
    model=gpt,
    idx=text_to_token_ids("Every effort moves you", tokenizer).to(device),
    max_new_tokens=25,
    context_size=NEW_CONFIG["context_length"],
    top_k=50,
    temperature=1.5
)

print("Output text:\n", token_ids_to_text(token_ids, tokenizer))

|
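The loader above splits the fused `c_attn` matrix with `np.split` and transposes each piece before assigning it. The following is a minimal sketch of why both steps are needed. It uses NumPy only, with a hypothetical toy `emb_dim` of 4 and a made-up `fused_w` array standing in for `params["blocks"][b]["attn"]["c_attn"]["w"]`. It assumes the checkpoint stores weights as `(in_features, out_features)`, while a linear layer in the `torch.nn.Linear` convention stores `(out_features, in_features)`.

```python
import numpy as np

# Hypothetical toy size for illustration; GPT-2 small uses emb_dim = 768.
emb_dim = 4

# Made-up stand-in for the fused QKV weight of one block:
# shape (emb_dim, 3 * emb_dim), with Q, K, and V laid side by side.
fused_w = np.arange(emb_dim * 3 * emb_dim, dtype=np.float32).reshape(emb_dim, 3 * emb_dim)

# Split along the last axis into three equal (emb_dim, emb_dim) blocks.
q_w, k_w, v_w = np.split(fused_w, 3, axis=-1)

# Checkpoint convention (assumed): y = x @ W, with W of shape (in, out).
x = np.ones((2, emb_dim), dtype=np.float32)
checkpoint_out = x @ q_w

# Linear-layer convention: the stored weight has shape (out, in) and y = x @ weight.T.
# Storing q_w.T therefore reproduces the checkpoint's computation.
linear_weight = q_w.T
linear_out = x @ linear_weight.T

print(q_w.shape, np.allclose(checkpoint_out, linear_out))
```

Skipping the transpose would go unnoticed by the shape check in `assign` for these square matrices, which is why it helps to verify the convention separately.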